Inktober is an annual art challenge where participants create and share one drawing every day to improve their artistic skills and inspire creativity.

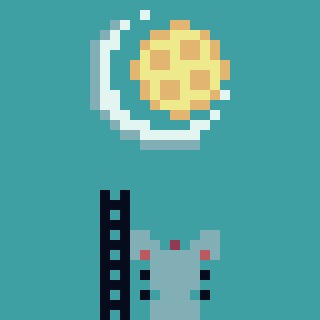

In the master’s course Computer Graphics & Computer Vision, students implement vertex and pixel shaders. Here, I used them to color Coraline’s eyes that are following the jumping mouse, which brings her through the tunnel to the Other World.

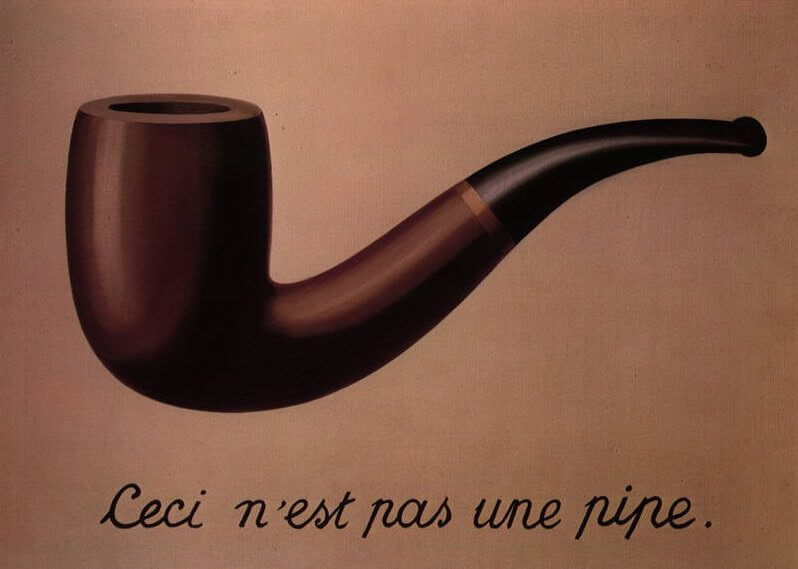

The Treachery of Images, 1929, by Rene Magritte challenges us to recognize the limitations of representations. The painting urges us to understand the distinction between representation and reality - a key theme in the field of neural decoding.

Neural decoding involves the translation of neuronal activity into meaningful representations, such as images, language or sounds, so that we can interpret the information encoded in the brain. While we may be excited by the potential of this pioneering field, it’s also important to remain grounded. There are inherent limitations and potential biases that may arise within the decoding process that we must consider. As Alfred Korzybski famously said, “the map is not the territory”, and the brain is the territory we seek to explore.

Light, upon entering the eyes, triggers a domino effect of electrical impulses in the visual stream of the brain. This process effectively encodes the visual stimuli from our environment into neural signals. The objective of decoding is then to model the reverse transformation from the neural responses back to the real-world concepts they represent. Let's acknowledge a fundamental truth: no matter how sophisticated or refined, models are simplifications of reality. These models, while offering significant insights, are essentially approximations that attempt to capture the complexities of a vast system using a set of assumptions and measured parameters.

Forgetting that the map is not the territory can lead us into the traps of reductionism and oversimplification.

Let’s consider an illustrative example:

Imagine researchers have developed a neural decoding model trained to reconstruct visual images from brain activity. This model is designed to “see” what a person sees by interpreting their neural signals. To ensure it works well across a variety of settings, it has been trained with a diverse array of images.

However, visual perception is not just about seeing; it’s deeply personal and influenced by emotions and memories. For instance, our emotional state can affect our attention, causing us to focus more on certain aspects of a visual scene or interpret colors and shapes in a way that aligns with our feelings. As a result, when a person looks at a painting, the model would likely reconstruct it in terms of the general outlines seen by the eyes, but the reconstruction lacks the subjective depth that colors human perception. Thus, it does not fully represent the territory of the individual’s perceptual landscape.

But there is another side to the coin. As Joan Robinson noted, “a model which took account of all the variegation of reality would be of no more use than a map at the scale of one to one”. Recognizing the limitations of models doesn’t imply we should aim for a perfect depiction of reality. Capturing every detail and variation within a model is not only impractical but would result in a tool too complex and cumbersome. It would lead to confusion rather than clarity. As such, generalization remains a powerful tool.

Models serve as a foundation for further exploration and discovery.

By simplifying the complexity of reality and identifying overarching principles, models provide a structured approach to grasp the world around us. They enable us to generate insights and make predictions about broader situations. Much like a map helps us navigate unfamiliar terrain, neural decoding models help us investigate the neural landscape, capturing its essential elements and illuminating critical relationships. While these models don’t encompass the full complexity of reality, they offer invaluable guidance. It’s crucial to strike a balance between the representation provided by models and the actual reality they aim to simulate. Acknowledging the limitations and generalizations inherent in models is important, but we should also appreciate how they enhance our understanding and drive scientific inquiry forward. In essence, models are not just tools for representation; they serve as a foundation for further exploration and discovery. Through a continual cycle of hypothesis testing, experimentation, and revision, they enable us to ask precise questions and expand our knowledge.

The notion that representations are simplifications of reality goes even further. It reaches the core of our own mental model and the way we perceive the world. Our neural representation of the world is, in fact, an abstraction of the real world. We can never have direct access to the full richness of reality but can only catch bits and pieces through our senses and cognitive processes. Our brains constantly construct and interpret an internal representation of the world based on this limited information, colored by our subjective beliefs, expectations and biases. It is therefore important to be critical not only of the limitations of decoding models themselves but also of our own mental model, which influences how we interpret the results. This recognition invites us to humility and continuous reflection on the complex nature of our own thinking.

The promise of neural decoding is enticing. The prospect of unlocking thoughts and intentions purely by analyzing neural activity raises hope for the treatment of neurological disorders, the restoration of sensory and motor skills, and even the possibility of mind-reading. Yet, it’s essential to remember that this field is still in its infancy. Premature conclusions could lead to distorted results and misinterpretations. While we have made progress in decoding sensory inputs, fully understanding abstract thoughts, subjective experiences, and higher cognitive functions remains largely beyond our reach. The human mind is an elusive entity, and our current technologies still have a long way to go before they can truly grasp the full spectrum of our inner lives.

So let us, amidst the excitement about the possibilities of neural decoding, maintain humility. We must be mindful of the limitations and biases inherent in this field. In a world craving quick answers and immediate gratification, it is more important than ever to approach our research with patience and methodical diligence.

That’s all!

Upfall symbolizes the paradoxical essence of progress, capturing the simultaneous presence of advancement and challenges, successes and setbacks. Acclaimed singer-songwriter Maya Shanti teamed up with generative AI: she wrote the lyrics of the first verse and let GPT-3 complete the second. The vocals were incorporated into the final song, which made it to the finals of the AI Song Contest 2022.

Maya Shanti:

I’m hanging upside down

And see the world is changing

I don’t know what to think of now

But it feels like I’m fading

So many questions but I know it’s always been there

I know, does she know, does he know

I know that I’m upfalling

I know that I’m upfalling

I know that I’m upfalling into this new world

GPT-3:

I close my eyes and dream

Of a world that’s brand new

I hope that one day you’ll see

That changes can be good

I can be good

I can be good

Written by Thirza Dado & Umut Güçlü.

The uncanny cliff hypothesizes that artificial figures remain either on the cliff (i.e., perceived as human-like) or they fall from the cliff (i.e., perceived as fake with disturbing feelings of eeriness and unease).

Generative Adversarial Networks (GANs) are powerful generative models trained to synthesize (“fake”) data that seem indistinguishable from “real” data. A recent study demonstrated experimentally how GANs can be used to reconstruct literal pictures of what volunteers in the brain scanner were seeing by neural decoding of their brain recordings. Concretely, these volunteers were looking at pictures of faces of people; faces that do not really exist but are instead synthesized by a Progressively Grown GAN (PGGAN) for faces.

The goal of neural decoding is to discover what information (about a stimulus) is present in the brain. That said, any property of a stimulus could potentially be decoded from the brain.

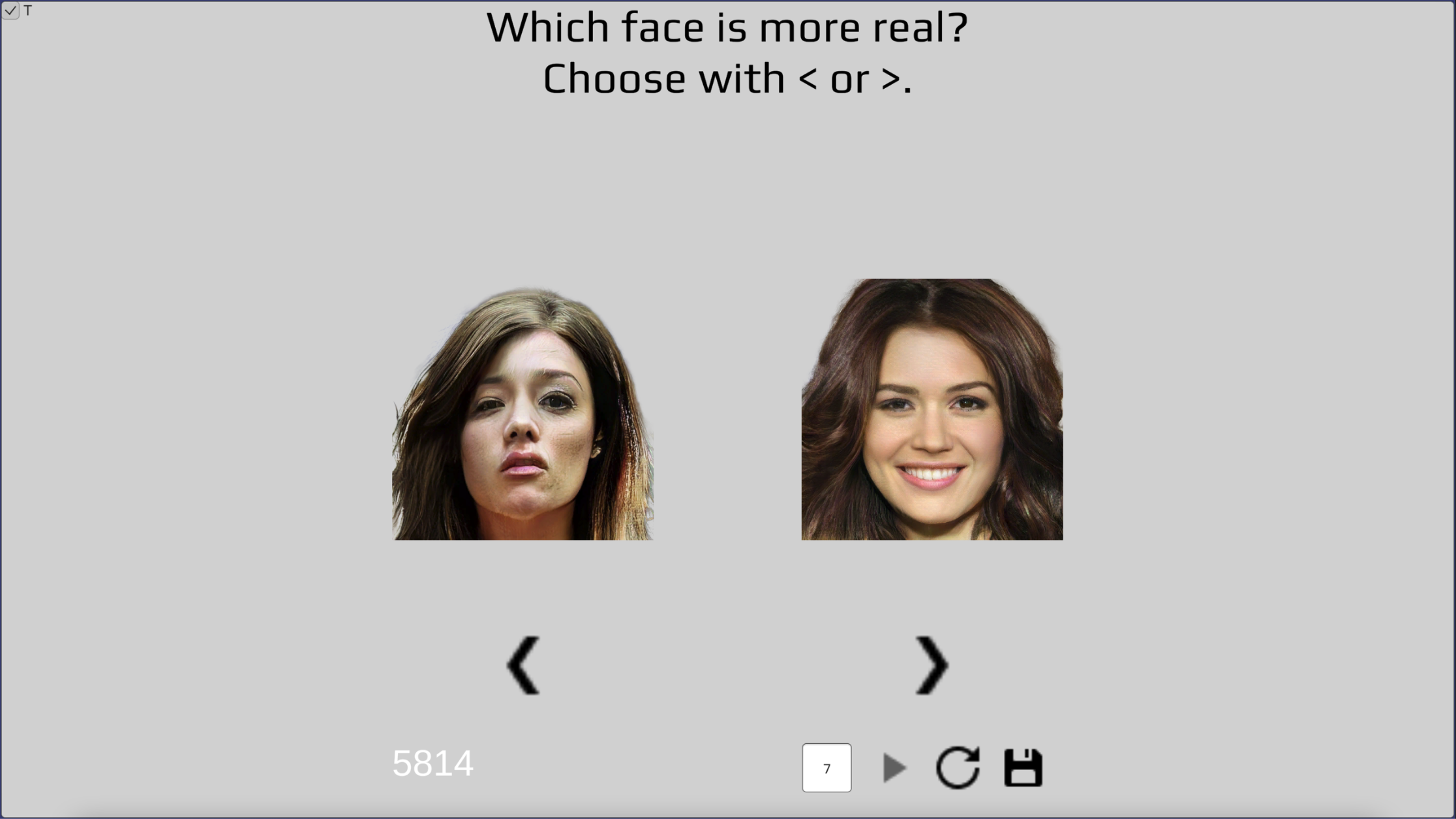

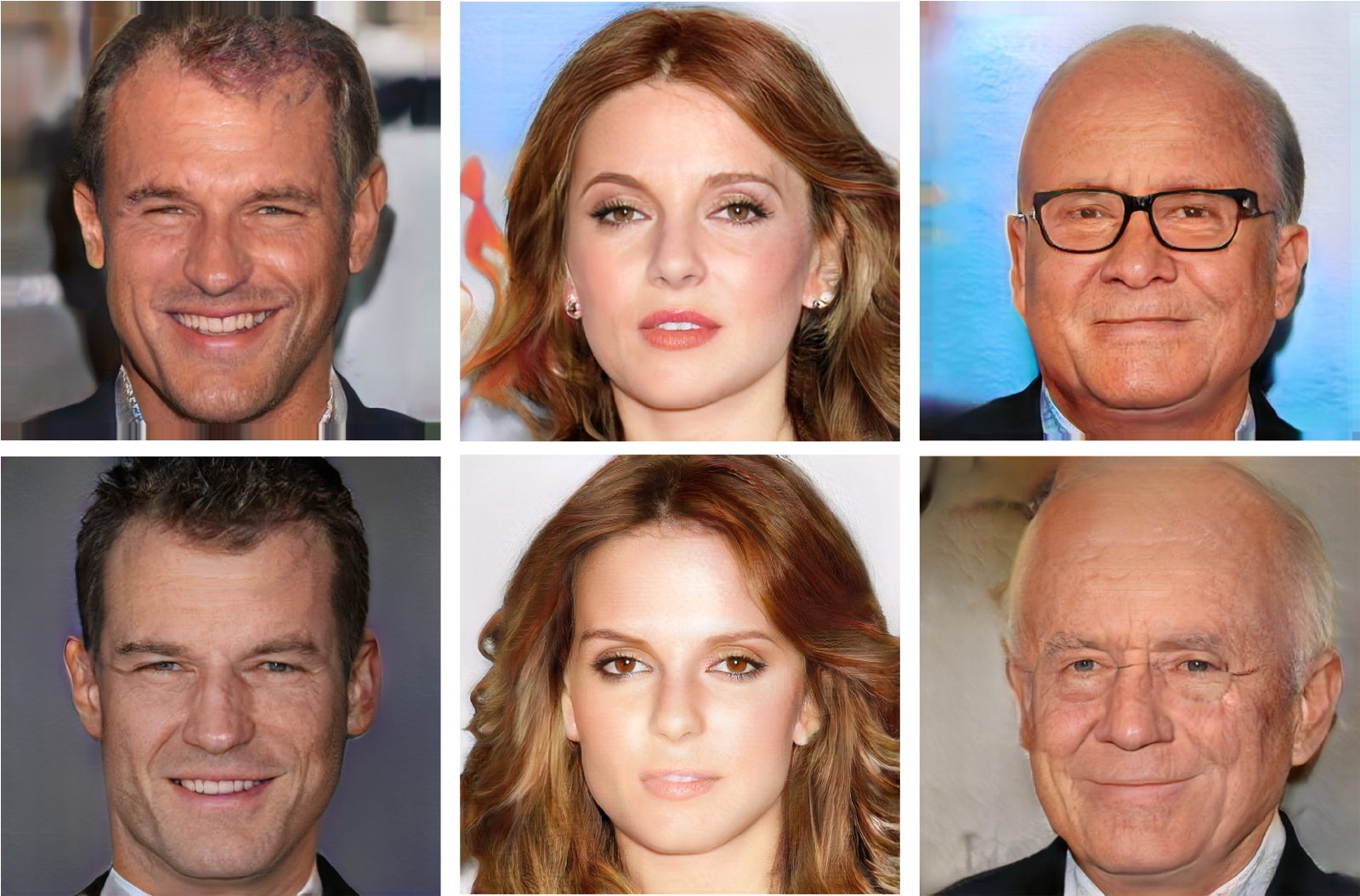

A selection of faces from the used dataset illustrates that some faces look more natural or real and some faces look more fake. We call this property “syntheticity”.

Although the presented faces in the experiment look hyperreal to human observers in general (but are all fake and do not exist), close inspection of the used face stimuli revealed that some faces look less real than others, resulting in disturbing feelings of eeriness and unease. That is, these data have a property of “syntheticity” that denotes how real or fake a face looks.

The uncanny valley theory hypothesizes that the affinity response of a human observer towards an artificial figure becomes more and more positive when it looks more and more human-like but up to a certain point where it abruptly switches from empathy to revulsion. Alternatively, it has been proposed that the valley is more like an uncanny cliff:

“We are not certain if this section [of the uncanny valley between the deepest dip and the human level] actually exists thereby prompting us to suggest that the uncanny valley should be considered more of a cliff than a valley, where robots strongly resembling humans could either fall from the cliff or they could be perceived as being human.”

Bartneck, C., Kanda, T., Ishiguro, H., & Hagita, N. (2007)

Here, we will decode syntheticity from neural data and see whether the results indicate a gradient or a cliff!

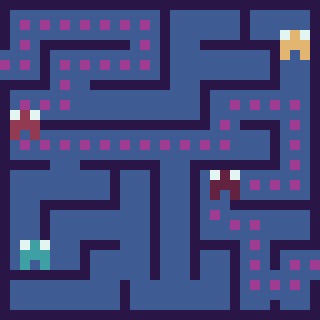

Behavioral experiment to sort faces on syntheticity.

To quantify the behavioral phenomenon of perceived syntheticity, we can do a behavioral experiment where a volunteer attributes syntheticity scores to all the faces. Concretely, the volunteer (i.e., me, I volunteered) sees two face images at a time and is asked “which face looks more real” and, if this question was too difficult to answer, “which face do you like better”. As such, the faces get scored on syntheticity and we can sort them from real- to fake-looking.

Presenting every possible pair of faces would take n(n−1)/2 = 589,155 comparisons for n = 1086, which is O(n²). To be more efficient, we can implement the divide-and-conquer merge sort algorithm, which iteratively merges smaller lists of sorted images until one big sorted list of fake-to-real-looking faces remains. Each face is compared with only some of the other faces because the remaining relations can be inferred from earlier decisions, resulting in a worst-case bound of O(n log n). Even so, it still took me three days to make 10136 comparisons😬. The experiment was implemented in Unity and C#.
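To make the saving concrete, here is a minimal Python sketch (not the Unity/C# experiment code) that counts how many pairwise judgments a merge sort needs. The recursive top-down variant, the `prefer_left` callback and the simulated "volunteer" (a plain numeric comparison) are assumptions made for illustration.

```python
import math
import random

def merge_sort(items, prefer_left, counter):
    """Sort using only pairwise judgments; counter[0] tallies the comparisons."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left = merge_sort(items[:mid], prefer_left, counter)
    right = merge_sort(items[mid:], prefer_left, counter)
    merged, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        counter[0] += 1  # one trial: two faces shown side by side
        if prefer_left(left[i], right[j]):
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])   # leftovers are already in order
    merged.extend(right[j:])
    return merged

n = 1086
random.seed(0)
faces = list(range(n))
random.shuffle(faces)

counter = [0]
# Simulated volunteer: a lower number stands for "looks more fake".
ordered = merge_sort(faces, lambda a, b: a <= b, counter)

all_pairs = n * (n - 1) // 2                 # exhaustive pairwise design
upper_bound = n * math.ceil(math.log2(n))    # loose worst-case bound for merge sort
print(counter[0], upper_bound, all_pairs)
```

The comparison count stays below the loose bound of n⌈log₂ n⌉, which is a small fraction of the exhaustive design.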

You can find the result files here: ridx contains the initial (unsorted) index order that determined which pair of faces would be presented on the screen, and sidx is the index list sorted on syntheticity by the volunteer. That is, we reorder the original ridx by the “sorted” sidx. In the last for-loop in the code snippet below, we then look up, for each face index from 0 to 1085, its position in this sorted list and use that rank as its syntheticity score (fake- to real-looking).

    with open(path + "sidx_T_7_10136.txt") as f:
        sidx = np.array([int(i) for i in f])
    with open(path + "ridx_T_7_10136.txt") as f:
        ridx = np.array([int(i) for i in f])[:len(sidx)]

    # Face indices ordered from fake- to real-looking.
    sorted_idx = ridx[sidx]

    # The score of face i is its rank (position) in the sorted list.
    scores = np.zeros(1086)
    for i in range(1086):
        scores[i] = np.where(sorted_idx == i)[0][0]
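The rank lookup can be checked on a toy example. The sketch below uses five hypothetical faces and assumes, as the snippet above implies, that `sidx` holds positions into `ridx`. It also shows a loop-free way to compute the same ranks.

```python
import numpy as np

# Hypothetical toy data: 5 faces instead of 1086.
ridx = np.array([3, 0, 4, 1, 2])   # initial presentation order of face indices
sidx = np.array([2, 0, 4, 3, 1])   # positions into ridx, ordered fake -> real

sorted_faces = ridx[sidx]          # face indices ordered fake -> real

# Vectorized rank assignment: the face at position k gets score k.
scores = np.empty(len(sorted_faces), dtype=int)
scores[sorted_faces] = np.arange(len(sorted_faces))

# Loop-based lookup, one face at a time.
loop_scores = np.array([np.where(sorted_faces == i)[0][0] for i in range(5)])
print(sorted_faces, scores, loop_scores)
```

Both approaches give the same ranks, and the vectorized form is equivalent to `np.argsort(sorted_faces)` when every face index occurs exactly once.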

We use the hyper dataset of fMRI measurements to PGGAN-generated face stimuli (whole dataset). In this blog post, we use the same 4096-voxel selection as the original hyper study which can be found here but it would also be interesting to have a look at whole-brain or different brain areas. Let’s hyperalign and average the brain data of the two participants (just like we did in a previous blog post). Alternatively, you can also just pick the brain responses of either subject 1 or 2.

    ! apt-get install swig
    ! pip install -U pymvpa2

    import mvpa2.datasets
    import mvpa2.algorithms.hyperalignment
    import numpy as np
    import pickle

    def hyperalignment(a, b):
        # Map both participants to a common functional space and average them.
        x = [a, b]
        dataset = [mvpa2.datasets.Dataset(x_) for x_ in x]
        mappers = mvpa2.algorithms.hyperalignment.Hyperalignment()(dataset)
        y = [mappers[j].forward(dataset[j]).samples for j in range(len(dataset))]
        return (y[0] + y[1]) / 2

    path = "/yourpath/"
    with open(path + "data_1.dat", 'rb') as f:
        X_tr1, _, X_te1, _ = pickle.load(f)
    with open(path + "data_2.dat", 'rb') as f:
        X_tr2, _, X_te2, _ = pickle.load(f)

    # Concatenate test and training responses (in that order) per participant.
    X_1 = np.array(X_te1 + X_tr1)
    X_2 = np.array(X_te2 + X_tr2)
    X = hyperalignment(X_1, X_2)

We do a linear mapping from brain responses to syntheticity scores. The original dataset order (test + train) is permuted to ensure various degrees of syntheticity because the quality in the original test set (36 faces) was quite good. The syntheticity scores in the test set would otherwise all be more or less similar (i.e., very real-looking). We use a 90:10 split where 90% of the data is used as the training set and the remaining 10% is used as the held-out test set to evaluate model performance.

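    from scipy.stats import zscore
    from sklearn.linear_model import LinearRegression

    # Shuffle the faces so that the test set covers a range of syntheticity scores.
    np.random.seed(1)
    permutation = np.random.permutation(1086)
    _X = X[permutation]
    _T = scores[permutation]

    # Hold out the first 10% (108 faces) for testing.
    n = int(1086 / 100 * 10)
    x_te = zscore(_X[:n])
    x_tr = zscore(_X[n:])
    t_te = zscore(_T[:n])
    t_tr = zscore(_T[n:])

    reg = LinearRegression().fit(x_tr, t_tr)
    y_te = reg.predict(x_te)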

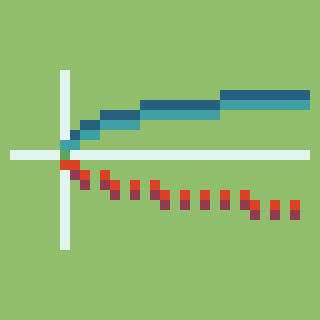

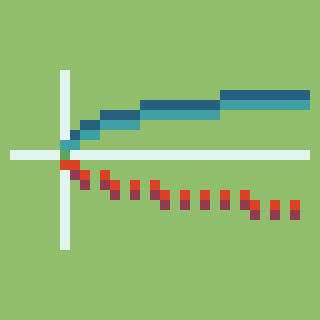

To evaluate the model performance of our linear decoder, we can look at the correlation between the predicted scores from brain data and the syntheticity scores from the behavioral experiment.

    from typing import Tuple

    from scipy import stats
    from scipy.stats import t

    def pearson_correlation_coefficient(x: np.ndarray, y: np.ndarray, axis: int) -> Tuple[np.ndarray, np.ndarray]:
        # Pearson r as the mean product of z-scores, with a two-sided p-value
        # from a t-distribution with n - 2 degrees of freedom.
        r = (np.nan_to_num(stats.zscore(x)) * np.nan_to_num(stats.zscore(y))).mean(axis)
        p = 2 * t.sf(np.abs(r / np.sqrt((1 - r ** 2) / (x.shape[0] - 2))), x.shape[0] - 2)
        return r, p

    r, p = pearson_correlation_coefficient(y_te, t_te, 0)
    print(r.mean(), p.mean())

This results in r=0.4298, p=3.46e-06, meaning that we can indeed predict continuous syntheticity scores from the fMRI recordings of the hyper experiment. This suggests that the neural representations of the perceived faces contain continuous rather than binary information on syntheticity. If the encoded information had been binary (i.e., either real- or fake-looking), it would not have been possible to decode these continuous values from the brain. In conclusion, our result supports the uncanny valley theory rather than the uncanny cliff.
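As a sanity check on the reported p-value, the t-statistic behind it can be worked out by hand. This assumes the test set holds n = 108 faces, which is the value of `int(1086 / 100 * 10)` in the split.

```latex
t = r\sqrt{\frac{n-2}{1-r^{2}}}
  = 0.4298\,\sqrt{\frac{106}{1-0.4298^{2}}}
  \approx 0.4298 \times 11.40
  \approx 4.90
```

With 106 degrees of freedom, a t-value of about 4.9 corresponds to a two-sided p-value on the order of 10⁻⁶, in line with the reported p=3.46e-06.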

It would be cool to make comparisons with other metrics such as feature maps and/or (classification) scores of discriminator networks. Further, we could also use a searchlight to identify where and with what magnitude syntheticity is encoded in the brain.

That’s all.

Written by Thirza Dado & Umut Güçlü.

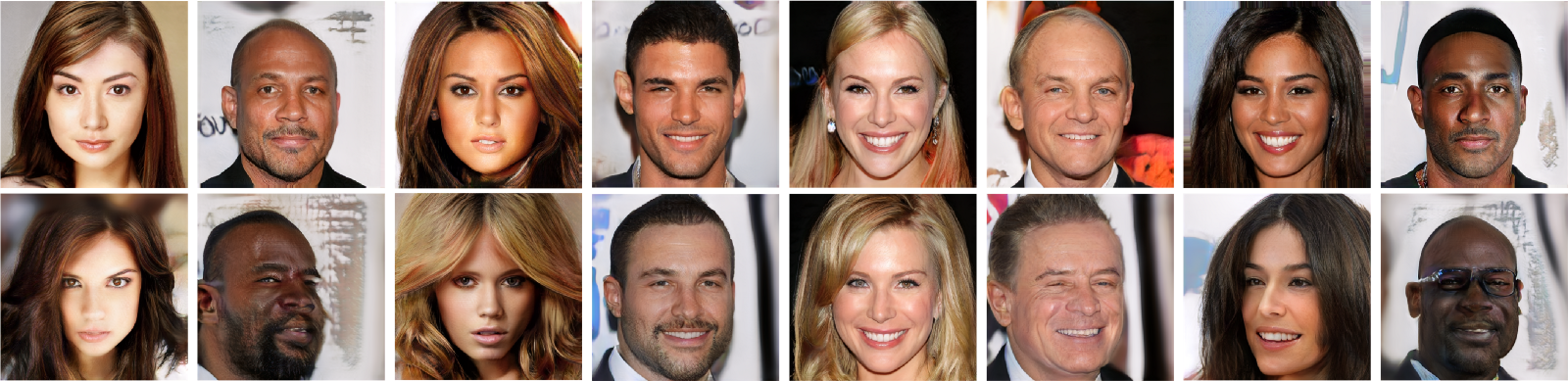

Stimuli (top row) and their reconstructions from brain data (bottom row).

Neural decoding seeks to find what information about a perceived external stimulus is present in the corresponding brain response. In particular, the original stimulus can be reconstructed based on brain data alone. This study resulted in the most accurate reconstructions of face perception to date by decoding the brain recordings of two individual participants separately. To get even closer, we repeated this approach with the averaged brain responses.

Here, we show you how we did it.

In the original paper, two participants in the brain scanner were presented with face stimuli that elicited specific functional responses in their brains. This experiment resulted in a (faces, responses) dataset that taught a decoder to map brain responses to the corresponding faces. This trained decoder could now transform unseen (held-out) brain data back into the perceived stimuli. The model was called HYPER (HYperrealistic reconstruction of PERception).

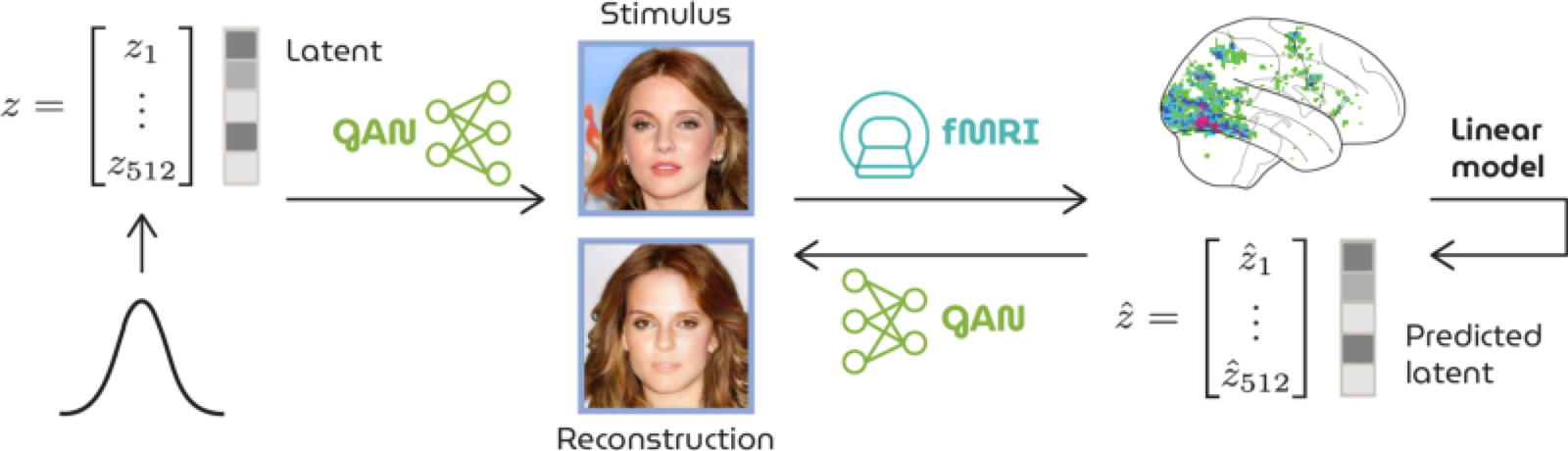

The face stimulus was presented to the participant in the MRI scanner that recorded the corresponding neural responses. Neural decoding of these responses then reconstructed what the participant was originally seeing.

The secret ingredient was the following: the face stimuli were artificially synthesized by the generator network of a progressively grown GAN for faces from randomly sampled latent vectors; the people in the presented images did not really exist. As such, the latents underlying these faces were known (because they were used for generation in the first place) whereas those of real face images can never be directly accessed - only approximated which entails information loss. Note that these results are legitimate reconstructions of visual perception regardless of the nature of the stimuli themselves.

Schematic workflow of HYPER. A latent is fed to the GAN to generate a face image that is presented to a participant in the MRI scanner. From the recorded brain response to this stimulus, we predict a latent that is also fed to the GAN for (re-)generation.

The high resemblance indicates a linear relationship between latents and brain recordings. Simply put, latents and brains effectively captured the same defining stimulus features (e.g., age, gender, hair color, pose) so that latents could be predicted as a linear combination of the brain data and fed to the generator for (re-)generation of what was perceived.

The original study trained a separate decoder for each individual participant. To get even closer to the external stimulus, we can capture the shared neural information across participants by applying an additional preprocessing step to the brain data. This step involves aligning and reslicing the functional brain responses with hyperalignment - a remixing process that iteratively maps brain data of multiple participants to a common functional space. Note that we are working in the functional domain which is about brain function rather than the topography in the anatomical domain. The responses of different brains are now comparable in function and the average brain response per stimulus can be taken to train one general decoder.

All the stimulus-reconstruction pairs in this post result from HYPER with hyperaligned and averaged data.

Hyperalignment can be implemented using PyMVPA:

    !apt-get install swig
    !pip install -U pymvpa2

    import mvpa2.datasets
    import mvpa2.algorithms.hyperalignment
    import numpy as np
    import pickle

    def hyperalignment(a, b):
        # Map both participants to a common functional space and average them.
        x = [a, b]
        dataset = [mvpa2.datasets.Dataset(x_) for x_ in x]
        mappers = mvpa2.algorithms.hyperalignment.Hyperalignment()(dataset)
        y = [mappers[j].forward(dataset[j]).samples for j in range(len(dataset))]
        return (y[0] + y[1]) / 2
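The PyMVPA call hides what the alignment does. The core building block can be sketched with an orthogonal Procrustes rotation in plain NumPy. This is a simplified two-participant illustration on synthetic data, not PyMVPA's iterative procedure, and `procrustes_rotation` is a hypothetical helper name.

```python
import numpy as np

def procrustes_rotation(source, target):
    """Orthogonal matrix R that minimizes ||source @ R - target|| (Frobenius norm)."""
    u, _, vt = np.linalg.svd(source.T @ target)
    return u @ vt

rng = np.random.default_rng(0)
# Hypothetical toy data: 200 stimuli x 10 "voxels". Both participants encode the
# same information, but participant B's voxel space is rotated and slightly noisy.
shared = rng.standard_normal((200, 10))
true_rotation, _ = np.linalg.qr(rng.standard_normal((10, 10)))
subject_a = shared
subject_b = shared @ true_rotation + 0.01 * rng.standard_normal((200, 10))

rotation = procrustes_rotation(subject_b, subject_a)
aligned_b = subject_b @ rotation
average = (subject_a + aligned_b) / 2   # averaging is only meaningful after alignment

error_before = np.linalg.norm(subject_b - subject_a)
error_after = np.linalg.norm(aligned_b - subject_a)
print(error_before, error_after)
```

Before alignment, the two response matrices look unrelated voxel by voxel. After the rotation they nearly coincide, which is what makes averaging across participants sensible.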

Load in the data (publicly accessible in Google Drive). The test and training set consist of 36 and 1050 trials of 4096 (flattened) voxel responses, respectively. Concatenate the test and training data before hyperalignment.

    with open("yourpath/data_1.dat", 'rb') as f:
        X_tr1, _, X_te1, _ = pickle.load(f)
    with open("yourpath/data_2.dat", 'rb') as f:
        X_tr2, _, X_te2, _ = pickle.load(f)

    # Test trials first (36), then training trials (1050).
    X_1 = np.array(X_te1 + X_tr1)
    X_2 = np.array(X_te2 + X_tr2)
    X_hyperaligned = hyperalignment(X_1, X_2)

Train a neural decoder to predict latents from brain data. This decoder is implemented in MXNet. Let’s import the required libraries.

    !pip install mxnet-cu101

    from __future__ import annotations

    import os
    from typing import Tuple, Union

    import matplotlib.pyplot as plt
    import mxnet as mx
    from mxnet import autograd, gluon, nd, symbol
    from mxnet.gluon.nn import (Conv2D, Dense, HybridBlock,
                                HybridSequential, LeakyReLU)
    from mxnet.gluon.parameter import Parameter
    from mxnet.initializer import Zero
    from mxnet.io import NDArrayIter
    from PIL import Image
    from scipy.stats import zscore

Below you can find an MXNet implementation of the PGGAN generator. It takes a 512-dimensional latent and transforms it into a 1024 × 1024 RGB image.

    class Pixelnorm(HybridBlock):
        # Normalizes the feature vector at each pixel to unit mean square.
        def __init__(self, epsilon: float = 1e-8) -> None:
            super(Pixelnorm, self).__init__()
            self.eps = epsilon

        def hybrid_forward(self, F, x) -> nd:
            return x * F.rsqrt(F.mean(F.square(x), 1, True) + self.eps)


    class Bias(HybridBlock):
        # Adds a learned per-channel bias.
        def __init__(self, shape: Tuple) -> None:
            super(Bias, self).__init__()
            self.shape = shape
            with self.name_scope():
                self.b = self.params.get("b", init=Zero(), shape=shape)

        def hybrid_forward(self, F, x, b) -> nd:
            return F.broadcast_add(x, b[None, :, None, None])


    class Block(HybridSequential):
        # Doubles the spatial resolution, then applies two 3x3 convolutions.
        def __init__(self, channels: int, in_channels: int) -> None:
            super(Block, self).__init__()
            self.channels = channels
            self.in_channels = in_channels
            with self.name_scope():
                self.add(Conv2D(channels, 3, padding=1, in_channels=in_channels))
                self.add(LeakyReLU(0.2))
                self.add(Pixelnorm())
                self.add(Conv2D(channels, 3, padding=1, in_channels=channels))
                self.add(LeakyReLU(0.2))
                self.add(Pixelnorm())

        def hybrid_forward(self, F, x) -> nd:
            # Nearest-neighbour upsampling along height and width.
            x = F.repeat(x, 2, 2)
            x = F.repeat(x, 2, 3)
            for i in range(len(self)):
                x = self[i](x)
            return x


    class Generator(HybridSequential):
        def __init__(self) -> None:
            super(Generator, self).__init__()
            with self.name_scope():
                self.add(Pixelnorm())
                self.add(Dense(8192, use_bias=False, in_units=512))
                self.add(Bias((512,)))
                self.add(LeakyReLU(0.2))
                self.add(Pixelnorm())
                self.add(Conv2D(512, 3, padding=1, in_channels=512))
                self.add(LeakyReLU(0.2))
                self.add(Pixelnorm())
                self.add(Block(512, 512))
                self.add(Block(512, 512))
                self.add(Block(512, 512))
                self.add(Block(256, 512))
                self.add(Block(128, 256))
                self.add(Block(64, 128))
                self.add(Block(32, 64))
                self.add(Block(16, 32))
                self.add(Conv2D(3, 1, in_channels=16))

        def hybrid_forward(self, F: Union[nd, symbol], x: nd, layer: int) -> nd:
            # Project the latent to a 512 x 4 x 4 tensor, then run the remaining layers.
            x = F.Reshape(self[1](self[0](x)), (-1, 512, 4, 4))
            for i in range(2, len(self)):
                x = self[i](x)
                # Optionally stop early and return an intermediate activation.
                if i == layer + 7:
                    return x
            return x
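Two operations in the generator are easy to check in isolation: the pixel-wise normalization and the nearest-neighbour upsampling. The NumPy sketch below mirrors them outside MXNet. The function names are hypothetical, and the array layout is assumed to be batch × channels × height × width as in the code above.

```python
import numpy as np

def pixelnorm(x, epsilon=1e-8):
    """Scale each pixel's channel vector to unit mean square (NCHW layout)."""
    return x / np.sqrt(np.mean(np.square(x), axis=1, keepdims=True) + epsilon)

def upsample2x(x):
    """Nearest-neighbour upsampling: repeat rows (axis 2) and columns (axis 3)."""
    return np.repeat(np.repeat(x, 2, axis=2), 2, axis=3)

rng = np.random.default_rng(0)
x = rng.standard_normal((1, 512, 4, 4))

normalized = pixelnorm(x)
mean_square = np.mean(np.square(normalized), axis=1)  # one value per pixel

upsampled = upsample2x(x)

# The generator starts at 4 x 4 and stacks eight upsampling blocks.
resolution = 4
for _ in range(8):
    resolution *= 2
print(mean_square.round(3), upsampled.shape, resolution)
```

Eight doublings take the 4 × 4 starting tensor to the 1024 × 1024 output resolution.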

A dense (decoding) layer then transforms the 4096-dimensional functional responses into 512-dimensional latents. Only train the weights of this layer and keep the generator weights fixed.

    class Linear(HybridSequential):
        # A single dense layer that maps voxel responses to latents.
        def __init__(self, n_in, n_out):
            super(Linear, self).__init__()
            with self.name_scope():
                self.add(Dense(n_out, in_units=n_in))

Before training, all data have to be transformed to be of type NDArray (make sure to also store on GPU if you have access). The weight parameters of the generator (MXNet) can be found on Drive. Note that we are using gradient descent to fit the weights of the dense layer whereas ordinary least squares would yield a similar solution. However, the current setup allows you to experiment and try different things to make more sophisticated models (e.g., predict intermediate layer activations of PGGAN and include this in your loss function).

    # Set parameters.
    batch_size = 30
    max_epoch = 1500
    n_lat = 512
    n_vox = 4096

    # Make dataset to take batches from during training.
    def load_dataset(t, x, batch_size):
        return NDArrayIter({ "x": nd.stack(*x, axis=0) }, { "t": nd.stack(*t, axis=0) }, batch_size, True)

    # Latents.
    with open("yourpath/data_1.dat", 'rb') as f:
        _, T_tr, _, T_te = pickle.load(f)

    # Z-score the brain data.
    X_te = zscore(X_hyperaligned[:36])
    X_tr = zscore(X_hyperaligned[36:])
    train = load_dataset(nd.array(T_tr), nd.array(X_tr), batch_size)
    test = load_dataset(nd.array(T_te), nd.array(X_te), batch_size=36)

    # Initialize generator.
    generator = Generator()
    generator.load_parameters("yourpath/generator.params")
    mean_squared_error = gluon.loss.L2Loss()

    # Initialize linear model.
    vox_to_lat = Linear(n_vox, n_lat)
    vox_to_lat.initialize()
    trainer = gluon.Trainer(vox_to_lat.collect_params(), "Adam", {"learning_rate": 0.00001, "wd": 0.01})

    # Training.
    epoch = 0
    results_tr = []
    results_te = []
    while epoch < max_epoch:
        train.reset()
        test.reset()
        loss_tr = 0
        loss_te = 0
        count = 0
        for batch_tr in train:
            with autograd.record():
                lat_Y = vox_to_lat(batch_tr.data[0])
                loss = mean_squared_error(lat_Y, batch_tr.label[0])
            loss.backward()
            trainer.step(batch_size)
            loss_tr += loss.mean().asnumpy()
            count += 1
        # Test loss is tracked for plotting only; no gradients are computed here.
        for batch_te in test:
            lat_Y = vox_to_lat(batch_te.data[0])
            loss = mean_squared_error(lat_Y, batch_te.label[0])
            loss_te += loss.mean().asnumpy()
        loss_tr_normalized = loss_tr / count
        results_tr.append(loss_tr_normalized)
        results_te.append(loss_te)
        epoch += 1
        print("Epoch %i: %.4f / %.4f" % (epoch, loss_tr_normalized, loss_te))

    plt.figure()
    plt.plot(np.linspace(0, epoch, epoch), results_tr)
    plt.plot(np.linspace(0, epoch, epoch), results_te)
    plt.show()
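For comparison with the gradient-descent loop, the same kind of linear map can be fit in closed form. The sketch below uses ridge regression on small synthetic data. The sizes, the penalty `ridge` and the omission of a bias term are assumptions for illustration. With 4096 voxels and only 1050 training trials, the unregularized normal equations would be singular, so some penalty is needed in the real setting.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical toy sizes; the post uses 1050 trials, 4096 voxels and 512 latents.
n_trials, n_vox, n_lat = 300, 40, 8
X = rng.standard_normal((n_trials, n_vox))                      # brain responses
W_true = rng.standard_normal((n_vox, n_lat))                    # ground-truth map
T = X @ W_true + 0.1 * rng.standard_normal((n_trials, n_lat))   # noisy latents

# Ridge regression in closed form: W = (X^T X + lambda * I)^-1 X^T T.
ridge = 1.0
W = np.linalg.solve(X.T @ X + ridge * np.eye(n_vox), X.T @ T)

relative_error = np.linalg.norm(W - W_true) / np.linalg.norm(W_true)
print(relative_error)
```

The closed form is fast and deterministic, while the gradient-descent setup is easier to extend with additional loss terms.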

After training, reconstruct faces from the test set responses. Note that the test data is not used for training (you only computed the test loss per epoch for plotting purposes) such that the model never encountered this brain data before.

    # Testing and reconstructing
    lat_Y = vox_to_lat(nd.array(X_te))
    out_dir = "yourpath/reconstructions"
    os.makedirs(out_dir, exist_ok=True)

    for i, latent in enumerate(lat_Y):
        # Generate the full-resolution image from the predicted latent.
        face = generator(latent[None], 9).asnumpy()
        # Map the output from [-1, 1] to [0, 255] and move channels last.
        face = np.clip(np.rint(127.5 * face + 127.5), 0.0, 255.0)
        face = face.astype("uint8").transpose(0, 2, 3, 1)
        Image.fromarray(face[0], 'RGB').save(out_dir + "/%d.png" % i)
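The post-processing step can be tried without a trained generator. This small sketch assumes the generator output lies roughly in [-1, 1] with channels first, as the scaling above implies, and uses a hypothetical 2 × 2 stand-in image.

```python
import numpy as np

def to_uint8_image(batch):
    """Map values in [-1, 1] (NCHW) to uint8 pixels in NHWC order."""
    scaled = np.clip(np.rint(127.5 * batch + 127.5), 0.0, 255.0)
    return scaled.astype("uint8").transpose(0, 2, 3, 1)

# Stand-in for a generator output: the three channels hold -1, 0 and 1.
channel_values = np.array([-1.0, 0.0, 1.0]).reshape(1, 3, 1, 1)
fake_output = channel_values * np.ones((1, 3, 2, 2))

image = to_uint8_image(fake_output)
print(image.shape, image[0, 0, 0])
```

Values outside [-1, 1] are clipped rather than wrapped, which avoids speckle artifacts when a predicted latent pushes the generator slightly out of range.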

In the end, one decoding model was trained on averaged functional neural responses which resulted in face reconstructions spectacularly analogous to the originally perceived faces. This raises the question of how close neural decoding can get to objective reality if we average the brain data of an even larger pool of eyewitnesses.

That’s all folks!

Dado, T., Güçlütürk, Y., Ambrogioni, L. et al. Hyperrealistic neural decoding for reconstructing faces from fMRI activations via the GAN latent space. Sci Rep 12, 141 (2022). https://doi.org/10.1038/s41598-021-03938-w

Learn to procedurally generate images based on a sample image using the wave function collapse algorithm during the artificial intelligence master’s course Computer Graphics & Computer Vision by Dr. Umut Güçlü at Radboud University. Lynn, Michelle and I are present as teaching assistants.

In the weird world of quantum mechanics, quantum particles can be in lots of different states simultaneously, represented by a wave function. Imagine you are trying to catch a butterfly but don’t know exactly where it is; it could be on a flower, in the air or somewhere else. The wave function then describes the probability of all these different states of the butterfly. However, when we actually observe the butterfly’s state, the wave function collapses and the butterfly is forced to take on one specific state. It’s like you caught the butterfly and can now see its exact colors. 🦋✨

The synthesis algorithm wave function collapse is loosely inspired by this concept from quantum mechanics; it uses a set of probabilities to describe all the possible states of a texture in superposition, which are then collapsed one by one to generate the resulting output pattern.

The gifs show the process of synthesis by gradually collapsing the states in the output images. Starting in superposition, each state collapse brings the image closer to a fully-collapsed pattern that looks locally similar to the input sample image (shown as the smaller image on the left).
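To illustrate the collapse-and-propagate loop, here is a minimal Python sketch of a simplified tile-based variant. It is an assumption-laden toy, not the course implementation: tiles are single characters, adjacency rules are learned from a hypothetical `sample`, and the cell with the fewest remaining options stands in for the lowest-entropy cell.

```python
import random
from collections import Counter

DIRECTIONS = [(0, 1), (1, 0), (0, -1), (-1, 0)]  # (row, column) offsets

def learn_rules(sample):
    """Collect tile frequencies and which tile pairs occur side by side."""
    weights = Counter(tile for row in sample for tile in row)
    allowed = set()
    for r, row in enumerate(sample):
        for c, tile in enumerate(row):
            for dr, dc in DIRECTIONS:
                rr, cc = r + dr, c + dc
                if 0 <= rr < len(sample) and 0 <= cc < len(row):
                    allowed.add((tile, sample[rr][cc], (dr, dc)))
    return weights, allowed

def run(grid, weights, allowed, rng):
    """Collapse cells one by one; return False if a cell runs out of options."""
    height, width = len(grid), len(grid[0])
    while True:
        open_cells = [(len(grid[r][c]), r, c) for r in range(height)
                      for c in range(width) if len(grid[r][c]) > 1]
        if not open_cells:
            return True
        fewest = min(n for n, _, _ in open_cells)
        _, r, c = rng.choice([cell for cell in open_cells if cell[0] == fewest])
        options = sorted(grid[r][c])
        grid[r][c] = {rng.choices(options, [weights[t] for t in options])[0]}
        stack = [(r, c)]
        while stack:  # propagate the new constraint to neighbouring cells
            r, c = stack.pop()
            for dr, dc in DIRECTIONS:
                rr, cc = r + dr, c + dc
                if not (0 <= rr < height and 0 <= cc < width):
                    continue
                keep = {b for b in grid[rr][cc]
                        if any((a, b, (dr, dc)) in allowed for a in grid[r][c])}
                if not keep:
                    return False
                if keep != grid[rr][cc]:
                    grid[rr][cc] = keep
                    stack.append((rr, cc))

def collapse(sample, height, width, seed=0, max_attempts=100):
    weights, allowed = learn_rules(sample)
    rng = random.Random(seed)
    for _ in range(max_attempts):  # restart from full superposition on failure
        grid = [[set(weights) for _ in range(width)] for _ in range(height)]
        if run(grid, weights, allowed, rng):
            return [[next(iter(cell)) for cell in row] for row in grid]
    raise RuntimeError("no consistent pattern found")

# Hypothetical sample: land (L), coast (C) and sea (S); L never touches S.
sample = ["LLCSS", "LCCSS", "CCSSC", "CSSCL", "SSCLL"]
output = collapse(sample, 8, 8)
print("\n".join("".join(row) for row in output))
```

The generated grid is larger than the sample but only contains neighbour pairs that also occur in the sample, which is the "locally similar" property described above.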

In the master’s course Computer Graphics & Computer Vision, students procedurally generate their own mountain-like terrain in Unity using Perlin noise. This was my landscape.

Click on any button in the window below to start playing.

It ends at infinity, so it never ends.
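For readers who want to see how such a heightmap comes about, below is a minimal NumPy sketch of 2D gradient (Perlin-style) noise. It is not the Unity/C# code from the course. The random gradient table, the function names and the summation of several octaves are assumptions made for illustration.

```python
import numpy as np

def fade(t):
    """Perlin's smoothing curve 6t^5 - 15t^4 + 10t^3."""
    return t * t * t * (t * (t * 6 - 15) + 10)

def perlin(x, y, seed=0):
    """2D gradient noise evaluated at coordinate arrays x and y."""
    rng = np.random.default_rng(seed)
    size = 256
    angles = rng.uniform(0, 2 * np.pi, (size, size))
    grads = np.stack([np.cos(angles), np.sin(angles)], axis=-1)  # unit gradients

    x0, y0 = np.floor(x).astype(int), np.floor(y).astype(int)
    fx, fy = x - x0, y - y0

    def corner(ix, iy, dx, dy):
        # Dot product of the lattice gradient with the offset to the sample point.
        g = grads[ix % size, iy % size]
        return g[..., 0] * dx + g[..., 1] * dy

    n00 = corner(x0, y0, fx, fy)
    n10 = corner(x0 + 1, y0, fx - 1, fy)
    n01 = corner(x0, y0 + 1, fx, fy - 1)
    n11 = corner(x0 + 1, y0 + 1, fx - 1, fy - 1)

    u, v = fade(fx), fade(fy)
    near = n00 + u * (n10 - n00)   # interpolate along x at y0
    far = n01 + u * (n11 - n01)    # interpolate along x at y0 + 1
    return near + v * (far - near)

def terrain(resolution=64, scale=8.0, octaves=4):
    """Sum octaves of noise: large hills first, then progressively finer detail."""
    xs, ys = np.meshgrid(np.linspace(0, scale, resolution),
                         np.linspace(0, scale, resolution))
    height = np.zeros_like(xs)
    amplitude, frequency = 1.0, 1.0
    for octave in range(octaves):
        height += amplitude * perlin(xs * frequency, ys * frequency, seed=octave)
        amplitude *= 0.5
        frequency *= 2.0
    return height

heights = terrain()
print(heights.shape, heights.min(), heights.max())
```

Because the noise is defined for any coordinate, a terrain can be extended indefinitely by evaluating new coordinates as the camera moves.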

In the master’s course Computer Graphics & Computer Vision, students implement their own Unity breakout game from scratch. This was mine.

Enter: start game, R: restart game, Escape: pause game.